Agent Smith is an open-source AI coding agent.

Using Agent Smith is easy: configure it to access your ticket system and it takes over from there. The pipeline runs through steps you may know from Azure DevOps pipelines: it clones your repository and executes a series of tasks. What Agent Smith does differently is what those tasks are. It analyzes the codebase to generate an implementation plan. Once the plan is finished and persisted, it writes the code and runs the tests. Finally, it opens a pull request. All of this is fully automated.

You might say you’ve seen that before. So what’s the difference?

It runs on your infrastructure. Bring your own API key and choose between the common LLMs (Claude, OpenAI, or Gemini; local LLMs are planned). Your repository needs some configuration first. After that you can run it locally, in Docker, in your K8s cluster, or as a GitHub Action.

Agent Smith started with my curiosity about how well an agent can build a more complex application without me writing any code. That led to a very structured and methodical approach to prompts, API token efficiency, and interaction with the AI coding assistant. The approach the agent uses on your tickets is the same approach I am going to use to enhance Agent Smith itself. Have a look at the Agent Smith repository.

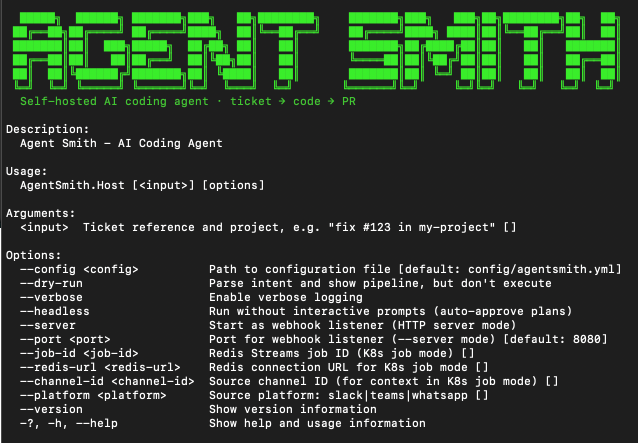

TL;DR — Try It

Docker:

docker run --rm \
  -e ANTHROPIC_API_KEY="$ANTHROPIC_API_KEY" \
  -e GITHUB_TOKEN="$GITHUB_TOKEN" \
  -v $(pwd)/config:/app/config \
  agentsmith:latest --headless "fix #42 in my-project"

Local:

dotnet run --project src/AgentSmith.Host -- "fix #42 in my-project"

Configure your project in agentsmith.yml, point it at your repo, and run it. You can configure it for GitHub or Azure DevOps. GitLab and Jira are supported as well, but not tested as thoroughly yet. Nothing leaves your infrastructure except the LLM API calls.

The Shift

Here’s what I believe is happening right now, and why I built this.

The work of software development is shifting. It will not disappear in the next 6 months or by the end of the year, as Elon just stated. But development will get much faster. Producing code is becoming a commodity. Everybody has their own idea of “let’s use this agent and get it done”. But which rules apply? Does the developer review the changes before opening a pull request? Are there pull requests at all?

How do you capture that speed and still keep the quality high?

The answer Agent Smith proposes: write precise tickets that a machine can act on. Define coding principles that produce consistent output. Document architecture decisions as machine-readable context, not just human-readable afterthoughts. Review and steer instead of typing line by line.

This requires more engineering discipline, of course. And yes, I know that writing tickets is no fun at all.

When the developer does not write the code anymore, what’s the task exactly?

From my point of view, the task is governance. The developers are the people with the knowledge: what breaks, what works, what really should be avoided. So there is no way around a clear and traceable method. The quality of your output no longer depends on how fast you type. It depends on how well you structure your intent.

Agent Smith is my attempt to put this into practice. As a freelancer working for different companies on different systems, I would love to have something like this in place, so that I can work this way at least for the easy, repetitive, or boilerplate code. Once the agents evolve and multi-agent support is done, more complex features become feasible as well. Multi-agent support is the feature to come next.

There will always be vibe coding in the IDE. There will always be problems where nobody even considers an agent. But for well-scoped, ticket-driven work, and I guess everybody has a lot of that in their backlog, the speed-up is real.

And what’s coming next makes it more interesting: interactive agents that ask clarifying questions in Slack or Teams, where the conversation happens where your team already works. Not fire-and-forget, but a dialogue at a higher level of abstraction.

Why Context Is Everything

The difference between an agent that produces garbage and one that produces usable code is not the model. It’s the context.

Agent Smith loads a coding-principles.md at runtime and injects it into every LLM call:

- Max 20 lines per method. No exceptions.

- Max 120 lines per class. Split when needed.

- SOLID principles. Dependency inversion everywhere.

- No magic strings. I haven’t written a god class since my VBA days, and I don’t want any here either.

These are the constraints the agent treats as non-negotiable. With these principles in context, the LLM produces small, focused classes with clean interfaces. Without them, you get the same 500-line spaghetti that every model defaults to. Okay, we all know that the agent can still produce it even with the rules in place. But that is what PRs are for.
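To illustrate the idea of injecting the principles into every call, here is a minimal Python sketch. Agent Smith itself is a .NET application, so this is not its code: the function names and the prompt layout are assumptions made for illustration, and only the file name coding-principles.md comes from the setup described above.

```python
from pathlib import Path


def load_principles(path: str) -> str:
    """Read the coding principles file once, e.g. at startup."""
    return Path(path).read_text(encoding="utf-8").strip()


def build_system_prompt(principles: str, task: str) -> str:
    """Place the principles ahead of the task so every call sees the same rules.

    The wording and layout of this prompt are hypothetical.
    """
    return (
        "You are a coding agent. Follow these coding principles strictly:\n\n"
        f"{principles}\n\n"
        f"Task:\n{task}"
    )
```

A caller would load the file once, for example `load_principles("config/coding-principles.md")`, and pass the result to `build_system_prompt` for each request, so the rules cannot silently drop out of a later iteration.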

Coding principles alone aren’t enough. Agent Smith uses a full context stack:

Architecture prompts: At the time of writing there are 17 implementation phases, all structured in the same way. The design documents define the domain, contracts, patterns, and boundaries. The agent is supposed to follow this architecture and method. All of the prompts are in the repo. Have a look and let me know what you think.

Model registry: A cost-aware routing layer sends scout tasks (file discovery) to cheap, fast models and primary coding tasks to more capable ones. No need to burn expensive tokens on work that doesn’t need them.
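A cost-aware routing layer of this kind can be sketched in a few lines of Python. This is an illustration under assumptions, not Agent Smith's registry: the `TaskKind` and `ModelChoice` types and the `max_tokens` values are hypothetical, and the two model names are the ones reported for the Azure DevOps run in this post.

```python
from dataclasses import dataclass
from enum import Enum


class TaskKind(Enum):
    SCOUT = "scout"      # file discovery: cheap and fast is good enough
    PRIMARY = "primary"  # planning and code generation: needs a capable model


@dataclass(frozen=True)
class ModelChoice:
    model: str
    max_tokens: int  # hypothetical per-task output limit


# Hypothetical registry; in a real setup this would come from configuration.
REGISTRY = {
    TaskKind.SCOUT: ModelChoice("claude-haiku-4-5", max_tokens=2048),
    TaskKind.PRIMARY: ModelChoice("claude-sonnet-4", max_tokens=8192),
}


def pick_model(kind: TaskKind) -> ModelChoice:
    """Return the model configured for the given kind of task."""
    return REGISTRY[kind]
```

Keeping the mapping in one place means swapping a provider or a model is a data change, not a code change.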

Prompt caching and context compaction: System prompts get cached across agentic loop iterations. Depending on the task, the conversation can grow, which directly affects cost. To keep the context small and cost-efficient, Agent Smith compresses earlier context while preserving what matters. The agent can work on large codebases without blowing up the context window or your budget.
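The compaction idea can be shown with a deliberately simple Python sketch: keep the most recent messages verbatim and collapse everything older into one short summary message. How Agent Smith actually decides "what matters" is not described here, so the truncation-based summary below is a stand-in assumption, and `compact` and its parameters are hypothetical names.

```python
from typing import Dict, List

Message = Dict[str, str]  # {"role": ..., "content": ...}


def compact(messages: List[Message], keep_recent: int = 4,
            max_chars: int = 200) -> List[Message]:
    """Collapse older messages into one summary message, keep recent ones as-is."""
    if len(messages) <= keep_recent:
        return list(messages)
    split = len(messages) - keep_recent
    older, recent = messages[:split], messages[split:]
    # Stand-in summary: the first line of each older message, truncated.
    # A real implementation would more likely ask a cheap model to summarize.
    lines = [m["content"].splitlines()[0][:max_chars] for m in older if m["content"]]
    summary = {"role": "user",
               "content": "Summary of earlier steps:\n" + "\n".join(lines)}
    return [summary] + recent
```

The system prompt is left out of `messages` on purpose: it stays byte-identical between iterations, which is what allows a provider-side prompt cache to hit.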

All of that is swappable. You can write different coding principles in different languages and use different models or providers. The config file drives everything.

How I Built It

I started this for two reasons. On the one hand, I wanted to see how far I could get with it. On the other, I wanted to find out how to optimize agentic work that runs on its own, without being integrated into an IDE. That is how Agent Smith began.

As an architect who loves coding and teaches coding principles (yes, the guy who “professionally knows it better”, don’t take this too seriously), I wanted a clear method. I worked out a procedure in my head and got started. It went well.

I didn’t write a single line of code in Agent Smith

I wasn’t thinking of a product. I have written some open-source libraries in my life, so why not do it again. There are plenty of AI coding tools, but most are either locked behind SaaS subscriptions or tightly coupled to a single platform. I wanted something self-hosted, provider-agnostic, and open. Something you can point at your own infrastructure and just run.

So I defined an architecture. Clean Architecture, DDD, command/handler pattern for the pipeline, provider abstractions for everything. Then I wrote the coding principles, the same coding-principles.md that Agent Smith now loads at runtime.
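For readers unfamiliar with the command/handler pattern, here is a small Python sketch of a pipeline built that way. It is an illustration only, since Agent Smith is written in .NET: the `PipelineContext`, `Command`, and `run_pipeline` names and the two toy handlers are assumptions, while the command names are borrowed from the run log in this post.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class PipelineContext:
    """Shared state that handlers read from and write to."""
    data: Dict[str, object] = field(default_factory=dict)


@dataclass(frozen=True)
class Command:
    name: str
    handler: Callable[[PipelineContext], str]  # returns a short result message


def run_pipeline(commands: List[Command], ctx: PipelineContext) -> List[str]:
    """Execute commands in order; an exception in a handler stops the run."""
    log = []
    total = len(commands)
    for i, cmd in enumerate(commands, start=1):
        message = cmd.handler(ctx)
        log.append(f"[{i}/{total}] {cmd.name} completed: {message}")
    return log


def fetch_ticket(ctx: PipelineContext) -> str:
    ctx.data["ticket"] = 42  # a real handler would call the ticket system
    return "Ticket 42 fetched"


def generate_plan(ctx: PipelineContext) -> str:
    ctx.data["plan"] = [f"Fix ticket {ctx.data['ticket']}"]
    return f"Plan generated with {len(ctx.data['plan'])} steps"


steps = [Command("FetchTicketCommand", fetch_ticket),
         Command("GeneratePlanCommand", generate_plan)]
for line in run_pipeline(steps, PipelineContext()):
    print(line)
# [1/2] FetchTicketCommand completed: Ticket 42 fetched
# [2/2] GeneratePlanCommand completed: Plan generated with 1 steps
```

Because each step only talks to the shared context, steps can be reordered, replaced, or tested in isolation, which is presumably why the pattern suits a provider-agnostic pipeline.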

From there, I broke the work into phases. Each phase got its own structured prompt: domain entities, contracts, providers, factories, pipeline execution, CLI, Docker. One phase at a time, each building on the last. An AI coding assistant in the IDE did the implementation; I provided the context, reviewed the output, and steered.

After phase 8, I ran it for the first time. Agent Smith’s first task was to work on itself. I pointed it at its own repo and told it to implement Issue #1: “Add a README.” A few small fixes later, it worked. Honestly, I found that pretty scary. The agent cloned its own code, read its own architecture, and wrote its own documentation.

But anyway, I was smiling. There were some very small issues, mostly due to my rather small token budget for the Claude API. But it just worked.

Three days and a few more phases later, it was running on a second provider: Azure DevOps instead of GitHub, Docker instead of local, in headless mode. Pretty cool. Complete success on the first try. Amazing. And again pretty scary. The PR had been created, the ticket closed, and it even posted a comment. The numbers from that run:

| Metric | Value |
|---|---|
| Scout model | claude-haiku-4-5 |
| Primary model | claude-sonnet-4 |
| Input tokens | 7,978 |
| Output tokens | 1,110 |
| Cache hit rate | 37% |
| Cost | fractions of a cent |

Looks pretty solid. The numbers should also end up in git after a run, but that is something for a later implementation.
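As a quick sanity check on the 37% figure: the run log reports 7978 input tokens and 4692 cache-read tokens. One formula that reproduces the logged value is cache reads divided by input plus cache reads. That this is how Agent Smith computes it is an assumption; the sketch only shows that the numbers are consistent with it.

```python
def cache_hit_rate(input_tokens: int, cache_read_tokens: int) -> float:
    """Share of prompt tokens served from the cache (assumed formula)."""
    total = input_tokens + cache_read_tokens
    return cache_read_tokens / total if total else 0.0


rate = cache_hit_rate(input_tokens=7978, cache_read_tokens=4692)
print(f"{rate:.1%}")  # 37.0%
```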

Have a look at how to start it and at the resulting log.

source .env && docker run --rm \
  -e ANTHROPIC_API_KEY="$ANTHROPIC_API_KEY" \
  -e AZURE_DEVOPS_TOKEN="$AZURE_DEVOPS_TOKEN" \
  -v $(pwd)/config:/app/config \
  -v ~/.ssh:/home/agentsmith/.ssh:ro \
  agentsmith:latest \
  --headless "fix #54 in agent-smith-test" 2>&1

info: AgentSmith.Application.UseCases.ProcessTicketUseCase[0]

Processing input: fix #54 in agent-smith-test

info: AgentSmith.Application.Services.RegexIntentParser[0]

Parsed intent: Ticket=54, Project=agent-smith-test

info: AgentSmith.Application.UseCases.ProcessTicketUseCase[0]

Running pipeline 'fix-bug' for project 'agent-smith-test', ticket 54

info: AgentSmith.Application.Services.PipelineExecutor[0]

Starting pipeline with 9 commands

info: AgentSmith.Application.Services.PipelineExecutor[0]

[1/9] Executing FetchTicketCommand...

info: AgentSmith.Application.Commands.CommandExecutor[0]

Executing FetchTicketContext...

info: AgentSmith.Application.Commands.Handlers.FetchTicketHandler[0]

Fetching ticket 54...

info: AgentSmith.Application.Commands.CommandExecutor[0]

FetchTicketContext completed: Ticket 54 fetched from AzureDevOps

info: AgentSmith.Application.Services.PipelineExecutor[0]

[1/9] FetchTicketCommand completed: Ticket 54 fetched from AzureDevOps

info: AgentSmith.Application.Services.PipelineExecutor[0]

[2/9] Executing CheckoutSourceCommand...

info: AgentSmith.Application.Commands.CommandExecutor[0]

Executing CheckoutSourceContext...

info: AgentSmith.Application.Commands.Handlers.CheckoutSourceHandler[0]

Checking out branch fix/54...

info: AgentSmith.Infrastructure.Providers.Source.AzureReposSourceProvider[0]

Cloning https://dev.azure.com/holgerleichsenring/agent-smith-test/_git/agent-smith-test to /tmp/agentsmith/azuredevops/agent-smith-test/agent-smith-test

info: AgentSmith.Infrastructure.Providers.Source.AzureReposSourceProvider[0]

Checked out branch fix/54 in /tmp/agentsmith/azuredevops/agent-smith-test/agent-smith-test

info: AgentSmith.Application.Commands.CommandExecutor[0]

CheckoutSourceContext completed: Repository checked out to /tmp/agentsmith/azuredevops/agent-smith-test/agent-smith-test

info: AgentSmith.Application.Services.PipelineExecutor[0]

[2/9] CheckoutSourceCommand completed: Repository checked out to /tmp/agentsmith/azuredevops/agent-smith-test/agent-smith-test

info: AgentSmith.Application.Services.PipelineExecutor[0]

[3/9] Executing LoadCodingPrinciplesCommand...

info: AgentSmith.Application.Commands.CommandExecutor[0]

Executing LoadCodingPrinciplesContext...

info: AgentSmith.Application.Commands.Handlers.LoadCodingPrinciplesHandler[0]

Loading coding principles from ./config/coding-principles.md...

info: AgentSmith.Application.Commands.CommandExecutor[0]

LoadCodingPrinciplesContext completed: Loaded coding principles (3524 chars)

info: AgentSmith.Application.Services.PipelineExecutor[0]

[3/9] LoadCodingPrinciplesCommand completed: Loaded coding principles (3524 chars)

info: AgentSmith.Application.Services.PipelineExecutor[0]

[4/9] Executing AnalyzeCodeCommand...

info: AgentSmith.Application.Commands.CommandExecutor[0]

Executing AnalyzeCodeContext...

info: AgentSmith.Application.Commands.Handlers.AnalyzeCodeHandler[0]

Analyzing code in /tmp/agentsmith/azuredevops/agent-smith-test/agent-smith-test...

info: AgentSmith.Application.Commands.CommandExecutor[0]

AnalyzeCodeContext completed: Code analysis completed: 1 files found

info: AgentSmith.Application.Services.PipelineExecutor[0]

[4/9] AnalyzeCodeCommand completed: Code analysis completed: 1 files found

info: AgentSmith.Application.Services.PipelineExecutor[0]

[5/9] Executing GeneratePlanCommand...

info: AgentSmith.Application.Commands.CommandExecutor[0]

Executing GeneratePlanContext...

info: AgentSmith.Application.Commands.Handlers.GeneratePlanHandler[0]

Generating plan for ticket 54...

info: AgentSmith.Application.Commands.Handlers.GeneratePlanHandler[0]

Plan generated: Create a MIT LICENSE file at the repository root with standard MIT license text, current year, and placeholder author name (1 steps)

info: AgentSmith.Application.Commands.CommandExecutor[0]

GeneratePlanContext completed: Plan generated with 1 steps

info: AgentSmith.Application.Services.PipelineExecutor[0]

[5/9] GeneratePlanCommand completed: Plan generated with 1 steps

info: AgentSmith.Application.Services.PipelineExecutor[0]

[6/9] Executing ApprovalCommand...

info: AgentSmith.Application.Commands.CommandExecutor[0]

Executing ApprovalContext...

info: AgentSmith.Application.Commands.Handlers.ApprovalHandler[0]

Plan summary: Create a MIT LICENSE file at the repository root with standard MIT license text, current year, and placeholder author name

[1] Create: Create LICENSE file with standard MIT license text using current year (2024) and placeholder author name

info: AgentSmith.Application.Commands.Handlers.ApprovalHandler[0]

Headless mode: auto-approving plan

info: AgentSmith.Application.Commands.CommandExecutor[0]

ApprovalContext completed: Plan approved by user

info: AgentSmith.Application.Services.PipelineExecutor[0]

[6/9] ApprovalCommand completed: Plan approved by user

info: AgentSmith.Application.Services.PipelineExecutor[0]

[7/9] Executing AgenticExecuteCommand...

info: AgentSmith.Application.Commands.CommandExecutor[0]

Executing AgenticExecuteContext...

info: AgentSmith.Application.Commands.Handlers.AgenticExecuteHandler[0]

Executing plan with 1 steps...

info: AgentSmith.Infrastructure.Providers.Agent.ClaudeAgentProvider[0]

Running scout agent with model claude-haiku-4-5-20251001

info: AgentSmith.Infrastructure.Providers.Agent.ClaudeAgentProvider[0]

Scout agent starting file discovery with model claude-haiku-4-5-20251001

info: AgentSmith.Infrastructure.Providers.Agent.ClaudeAgentProvider[0]

Scout discovered 1 relevant files using 6276 tokens

info: AgentSmith.Infrastructure.Providers.Agent.ClaudeAgentProvider[0]

Agent completed after 4 iterations

info: AgentSmith.Infrastructure.Providers.Agent.ClaudeAgentProvider[0]

Token usage summary: 7978 input, 1110 output, 1564 cache-create, 4692 cache-read, Cache hit rate: 37.0 %, Iterations: 9

info: AgentSmith.Infrastructure.Providers.Agent.ClaudeAgentProvider[0]

Agentic execution completed with 1 file changes

info: AgentSmith.Infrastructure.Providers.Agent.ClaudeAgentProvider[0]

Token usage summary: 7978 input, 1110 output, 1564 cache-create, 4692 cache-read, Cache hit rate: 37.0 %, Iterations: 9

info: AgentSmith.Application.Commands.Handlers.AgenticExecuteHandler[0]

Agentic execution completed: 1 files changed

info: AgentSmith.Application.Commands.CommandExecutor[0]

AgenticExecuteContext completed: Agentic execution completed: 1 files changed

info: AgentSmith.Application.Services.PipelineExecutor[0]

[7/9] AgenticExecuteCommand completed: Agentic execution completed: 1 files changed

info: AgentSmith.Application.Services.PipelineExecutor[0]

[8/9] Executing TestCommand...

info: AgentSmith.Application.Commands.CommandExecutor[0]

Executing TestContext...

info: AgentSmith.Application.Commands.Handlers.TestHandler[0]

Running tests for 1 changes...

warn: AgentSmith.Application.Commands.Handlers.TestHandler[0]

No test framework detected, skipping tests

info: AgentSmith.Application.Commands.CommandExecutor[0]

TestContext completed: No test framework detected, skipping tests

info: AgentSmith.Application.Services.PipelineExecutor[0]

[8/9] TestCommand completed: No test framework detected, skipping tests

info: AgentSmith.Application.Services.PipelineExecutor[0]

[9/9] Executing CommitAndPRCommand...

info: AgentSmith.Application.Commands.CommandExecutor[0]

Executing CommitAndPRContext...

info: AgentSmith.Application.Commands.Handlers.CommitAndPRHandler[0]

Creating PR for ticket 54 with 1 changes...

info: AgentSmith.Infrastructure.Providers.Source.AzureReposSourceProvider[0]

Committed and pushed changes: fix: Add a LICENSE file with MIT license text (#54)

info: AgentSmith.Infrastructure.Providers.Source.AzureReposSourceProvider[0]

Pull request created: https://dev.azure.com/holgerleichsenring/agent-smith-test/_git/agent-smith-test/pullrequest/4

info: AgentSmith.Application.Commands.Handlers.CommitAndPRHandler[0]

Pull request created: https://dev.azure.com/holgerleichsenring/agent-smith-test/_git/agent-smith-test/pullrequest/4

info: AgentSmith.Application.Commands.Handlers.CommitAndPRHandler[0]

Ticket 54 closed with summary

info: AgentSmith.Application.Commands.CommandExecutor[0]

CommitAndPRContext completed: Pull request created: https://dev.azure.com/holgerleichsenring/agent-smith-test/_git/agent-smith-test/pullrequest/4

info: AgentSmith.Application.Services.PipelineExecutor[0]

[9/9] CommitAndPRCommand completed: Pull request created: https://dev.azure.com/holgerleichsenring/agent-smith-test/_git/agent-smith-test/pullrequest/4

info: AgentSmith.Application.Services.PipelineExecutor[0]

Pipeline completed successfully

info: AgentSmith.Application.UseCases.ProcessTicketUseCase[0]

Ticket 54 processed successfully: Pipeline completed successfully

Success: Pipeline completed successfully

And it worked like a charm.
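The first lines of the log show a RegexIntentParser turning the input "fix #54 in agent-smith-test" into Ticket=54, Project=agent-smith-test. A rough Python sketch of that kind of parsing follows. The pattern and the `Intent` type are guesses for illustration, not the expression Agent Smith uses.

```python
import re
from typing import NamedTuple, Optional


class Intent(NamedTuple):
    ticket: int
    project: str


# Assumed input shape: "<verb> #<ticket> in <project>"
INTENT_PATTERN = re.compile(
    r"#(?P<ticket>\d+)\s+in\s+(?P<project>[\w.-]+)", re.IGNORECASE
)


def parse_intent(text: str) -> Optional[Intent]:
    """Extract ticket number and project name, or None if the text does not match."""
    match = INTENT_PATTERN.search(text)
    if match is None:
        return None
    return Intent(int(match["ticket"]), match["project"])


print(parse_intent("fix #54 in agent-smith-test"))
# Intent(ticket=54, project='agent-smith-test')
```

A regex keeps this step free of LLM calls, so the cheap and deterministic part of the pipeline stays cheap and deterministic.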

What’s Next

Agent Smith currently works as a CLI tool and GitHub Action. Interactive chat interfaces for Slack, Teams, and other platforms are in progress: agents that run as ephemeral containers on K8s, stream progress in real time, and ask you questions when they need clarification.

Have a look at the GitHub repository.

[…] Original source: hackernews […]